Most of the time, it makes sense to be well-informed before making a decision. This is especially true for important choices; while we might happily claim to have read the terms and conditions of a new website, we are unlikely to be that careless with our health or wealth.

And, of course, people can make bad decisions based on insufficient or false information, as we have seen in the cases of the 2020 U.S. election and the anti-vaxxer movement.

But can having too much knowledge lead us to make poorer choices instead of better ones? According to a study by Min Zheng and colleagues, published in Cognitive Research: Principles and Implications, that might occasionally be the case.

Advances in machine learning, particularly those related to models of cause and effect, have improved the knowledge available to feed into decision-making. These algorithms can identify the outcomes likely to follow from specific actions or inputs.

However, it’s not obvious how helpful such knowledge is when it comes to human decision-making. Simply providing individuals with causal knowledge may not be enough to aid their decisions; as co-author Samantha Kleinberg puts it, “being accurate is not enough for information to be valuable. We don’t know, in particular, how this information might interact with what people already know and believe.”

The researchers’ first experiment looked at the role that causal knowledge plays in the judgments we make daily. The team presented 1,718 participants with a real-world scenario and asked for their recommendation on how to handle it. Participants were told that a university freshman named Jane wanted to avoid gaining weight while still having fun with her friends, and were asked which one thing she should do to achieve her goal: go for a half-hour walk every weekend, maintain a healthy diet, avoid seeing her friends, or watch less TV.

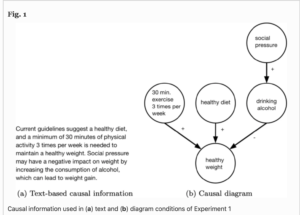

The participants were divided into three groups: those who received no additional information, those who received a text-based causal explanation of how diet and regular exercise relate to weight loss, and those who received a simple causal diagram. The researchers also asked those in the last two groups whether the causal explanations had been useful or had influenced their response.

In the absence of additional information, participants were remarkably accurate: 88.8% identified the correct response (i.e. that Jane should maintain a healthy diet). However, individuals in the two groups given causal information scored worse: 82.7% of those in the text-based explanation condition and 80.1% of those in the diagram condition identified the correct response.

The second experiment used a similar methodology, but this time the scenario was the management of type 2 diabetes, which only some participants had prior experience with. Participants were asked what the best way would be for someone with diabetes to manage their condition, choosing among options that involved carrying on as normal, exercising more, choosing a healthier dinner, and going cycling. They received either no additional information or a diagram illustrating the effects of regular exercise and a balanced diet.

Participants with and without type 2 diabetes made the correct decision—picking a nutritious dinner and going for a bike ride—at roughly the same rate when no causal information was provided. For individuals without personal experience with diabetes, additional causal information improved accuracy, raising it to 86.6%; however, when type 2 diabetes patients were provided causal knowledge, accuracy fell to barely 50%.

This, combined with the outcomes of the first experiment, suggests that causal diagrams are not intrinsically useless; rather, whether they help us make better decisions depends on our individual experience. When we face an unfamiliar situation, causal information can be helpful, but when we are already familiar with the situation, it can lead us to make worse decisions.

The researchers believe that our pre-existing mental models are an influencing factor. They say, “Our decisions are influenced by our past experiences and ideas rather than the causal information itself. With further information, we may lose confidence and begin doubting our decisions, which can occasionally indicate that we didn’t make the best decision.”

These conclusions were supported by the last experiment, which showed that participants could use causal information to make accurate decisions in made-up situations, such as a question about alien mind reading, where they had no personal experience with the topic. According to one of the researchers, Kleinberg, “In circumstances when people lack background knowledge, they grow more confident with the new information and make better decisions.”

The researchers also concluded the following: “Our ability to automatically extract causes from data is growing at a rapid pace, but little is known about whether causal information leads to better decisions in reality. Using large-scale online studies, we found that when people have some experience in a domain or believe they know about it, such as in the familiar domains of weight management and personal finance, causal information led to worse decisions with lower confidence. In contrast, individuals without diabetes-related experience made more accurate decisions in which they were more confident when given causal models. Future work is needed to better elicit an individual’s prior knowledge and to potentially personalize the information presented depending on their knowledge and the decision at hand. We found that text vs. graphical models representing causality can have different effects depending on the complexity of the model, suggesting a method is needed to quantify the utility of a given causal representation.”

Kleinberg goes on to comment on the impact of machine learning on our decision-making process: “This is not to say that the data that machine learning uncovers isn’t valuable; quite the opposite. However, the most effective strategy to convey information to others may be to recognize what people already believe and then adjust what we communicate to them accordingly.”